I have tried songgao/water, pkg/tuntap and even writing my own implementation based on some C code floating around, but no matter what I try, I can't receive TCP packets (ICMP and UDP work fine). I use a TUN interface, so I get only plain IP packets with no Ethernet header, and the MTU is set to 1300. I feel like I'm missing something extremely obvious, but I can't figure it out for the life of me.

```go
log.Printf("tun interface: %s", iface.Name())

func runBin(bin string, args.

log.Printf("isTCP: %v, header: %s", header.Protocol == 6, header)
```
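A TUN device hands over bare IP packets with no Ethernet header. That means the transport protocol can be read directly from the IP header. In IPv4 it is the Protocol field at byte offset 9. In the fixed IPv6 header it is the Next Header field at offset 6. The protocol numbers are TCP = 6, UDP = 17 and ICMP = 1.

The following is a minimal, stdlib-only sketch of that check. `transportProtocol` and the `proto*` constants are hypothetical names. The sketch assumes IPv6 packets carry no extension headers. It only classifies what the device delivers; it does not explain why TCP traffic might be missing.

```go
package main

import (
	"errors"
	"fmt"
)

// IANA protocol numbers for the transports mentioned in the question.
const (
	protoICMP = 1
	protoTCP  = 6
	protoUDP  = 17
)

// transportProtocol returns the transport-layer protocol number of a raw
// IP packet as delivered by a TUN device (no Ethernet header in front).
// Assumption: IPv6 packets have no extension headers, so the fixed
// header's Next Header field is the transport protocol.
func transportProtocol(pkt []byte) (int, error) {
	if len(pkt) < 1 {
		return 0, errors.New("empty packet")
	}
	switch version := pkt[0] >> 4; version {
	case 4:
		if len(pkt) < 20 {
			return 0, errors.New("truncated IPv4 header")
		}
		return int(pkt[9]), nil // IPv4 Protocol field
	case 6:
		if len(pkt) < 40 {
			return 0, errors.New("truncated IPv6 header")
		}
		return int(pkt[6]), nil // IPv6 Next Header field
	default:
		return 0, fmt.Errorf("unknown IP version %d", version)
	}
}

func main() {
	// Minimal 20-byte IPv4 header: version 4, IHL 5, protocol = TCP.
	ipv4 := make([]byte, 20)
	ipv4[0] = 0x45
	ipv4[9] = protoTCP

	proto, err := transportProtocol(ipv4)
	fmt.Println(proto == protoTCP, err)
}
```

On a real device, the same function would be applied to each buffer read from the TUN interface before any further header parsing.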

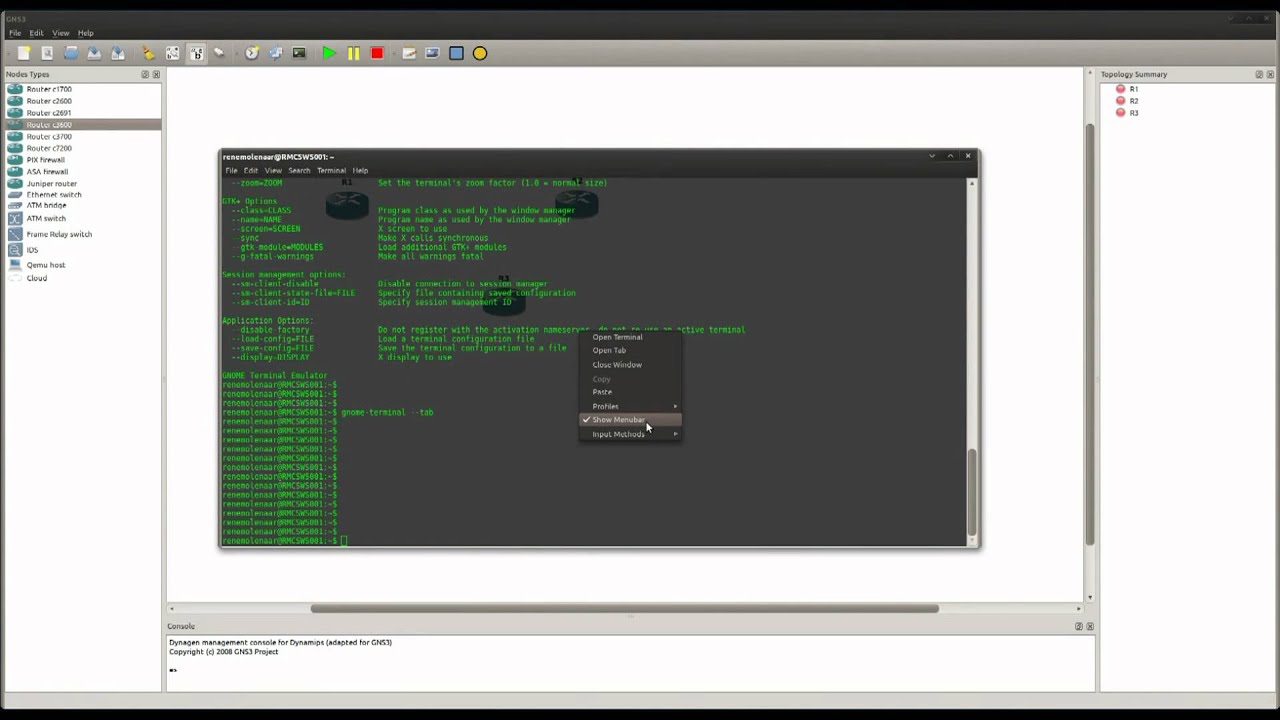

I'm working on a simple project that listens on a tun interface and modified the packets then re-sends them to the real interface. I am using the OSPF routing protocol in R1 and R2. The cloud in the topology represents the connection with the Ubuntu VM host. The transmit window (proportional to throughput) again gently ramps up around 810 KB until there's another packet loss. The network in GNS3 has two routers with this topology: I am using the virtual bridge interface, virbr0:192.168.122.1, to connect the two routers R1 and R2 with the VM Ubuntu. Instead, the sender reduces the transmit window to about 780 KB. Interestingly, this packet loss event does not cause a "halving" of the transmit window. The sender immediately retransmits that data in #5083. One RTT after that, SACK #5082 reports that #5042 was received and now only 1388 bytes are missing. We see SACK #5040 report that 2776 bytes are missing, #5042 is a retransmission of 1388 bytes. The result is that your throughput more than halves at that point.Ī short time later, there is another loss event. However, the loss of those 4 small packets causes the sender to reduce the transmit window to just 850 KB (620 packets x 1400 bytes) per round trip. In your case, the sender's transmit window initially ramps up to 2MB (1482 packets x 1400 MSS) per RT. The first set of SACKs start at #3458 and the first set of retransmitted packets are #3569, #3570, #3571 & #3572. The retransmitted packets are the correct size in this capture (ie, not the very large ones due to being captured before the IP layer "packetised" them). You will then see the retransmissions of the data that the SACKs reported as being lost. There can be multiple left and right edges, indicating multiple "gaps" in the flow. If you delve into the packet detail of the Dup-ACKs, you'll see the "left edge" and "right edge" values that identify them as SACKs. In this case you have to find the Selective ACKs - which are shown as "Dup-Acks" in Wireshark. 
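The SACK bookkeeping described above can be sketched in Go. Each SACK block covers the bytes [left edge, right edge) that were received out of order. The holes are therefore the ranges between the cumulative ACK and the start of each block.

Everything named here is hypothetical: the types `sackBlock` and `gap`, the function `missingRanges`, and the sequence numbers in `main`. The numbers only mirror the 2776-byte and 1388-byte gaps reported by SACKs #5040 and #5082. The sketch assumes that the retransmission in #5042 filled the first of the two missing segments. Sequence-number wraparound is ignored for simplicity.

```go
package main

import (
	"fmt"
	"sort"
)

// sackBlock is one SACK block: bytes [Left, Right) received out of order.
type sackBlock struct {
	Left, Right uint32
}

// gap is a byte range [Start, End) the receiver has not yet received.
type gap struct {
	Start, End uint32
}

// missingRanges returns the holes between the cumulative ACK and the
// highest SACKed byte. Assumption: no sequence-number wraparound inside
// the window, so plain integer comparison is safe.
func missingRanges(ack uint32, blocks []sackBlock) []gap {
	sorted := append([]sackBlock(nil), blocks...)
	sort.Slice(sorted, func(i, j int) bool { return sorted[i].Left < sorted[j].Left })

	var gaps []gap
	next := ack // first byte not yet known to be received
	for _, b := range sorted {
		if b.Left > next {
			gaps = append(gaps, gap{next, b.Left})
		}
		if b.Right > next {
			next = b.Right
		}
	}
	return gaps
}

func main() {
	// Hypothetical sequence numbers: the cumulative ACK is 100000 and a block
	// is SACKed starting 2776 bytes later (two missing 1388-byte segments).
	ack := uint32(100000)
	blocks := []sackBlock{{Left: ack + 2776, Right: ack + 2776 + 50000}}
	fmt.Println("after a SACK #5040-style report:")
	for _, g := range missingRanges(ack, blocks) {
		fmt.Printf("  missing %d bytes [%d, %d)\n", g.End-g.Start, g.Start, g.End)
	}

	// Assumption: the 1388-byte retransmission (#5042) filled the first hole,
	// so the cumulative ACK advances by 1388 and one segment is still missing.
	fmt.Println("after a SACK #5082-style report:")
	for _, g := range missingRanges(ack+1388, blocks) {
		fmt.Printf("  missing %d bytes [%d, %d)\n", g.End-g.Start, g.Start, g.End)
	}
}
```

Sorting the blocks first lets a single pass handle several left/right edge pairs, which is the "multiple gaps" case mentioned above.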
Your original "AIX-to-Cloud" capture file is more difficult to analyse because a lot of packets weren't captured; that is, they are missing from the trace file but were there in real life. However, the reason for the slow transfer is actually quite basic and common: relatively small packet losses at various times trigger the TCP "Congestion Avoidance" mechanism.

When a sender detects packet loss, it is supposed to "halve" its transmit window and then ramp up very slowly. Halving the bytes per round trip (or packets per RT) halves the throughput. The subsequent "gentle" ramp-up (usually by one packet per RT) means that overall throughput suffers severely. When there are multiple packet-loss events, a chart of the "Bytes-in-Flight" value forms a sawtooth pattern with fast decreases and slow increases. This is clearly visible, several times, in blue on the Wireshark chart (Statistics > TCP Stream Graphs > Window Scaling).

Vladimir Gerasimov gave an excellent presentation about Congestion Avoidance algorithms at SharkFest 2019; he did a lot of valuable research and preparation for it.
